In Brief:

- A research team in São Paulo has validated a non-invasive machine vision system for measuring broiler feeding biomechanics in real time.

- The setup pairs an Allied Vision EoSens high-speed camera, capturing at 300 frames per second, with a YOLOv8 and SAM image-analysis pipeline.

- The work links feed particle size directly to beak movement and ingestion efficiency, strengthening the case for data-driven poultry feeding control.

Researchers at Paulista University in São Paulo have published a machine vision framework designed to measure broiler feeding biomechanics in real time, using high-speed video and AI-based image segmentation to convert beak movement into quantifiable production data. The study, published in Applied System Innovation, sets out a non-invasive route to monitoring how birds interact with feed at a much finer level than conventional flock metrics allow.

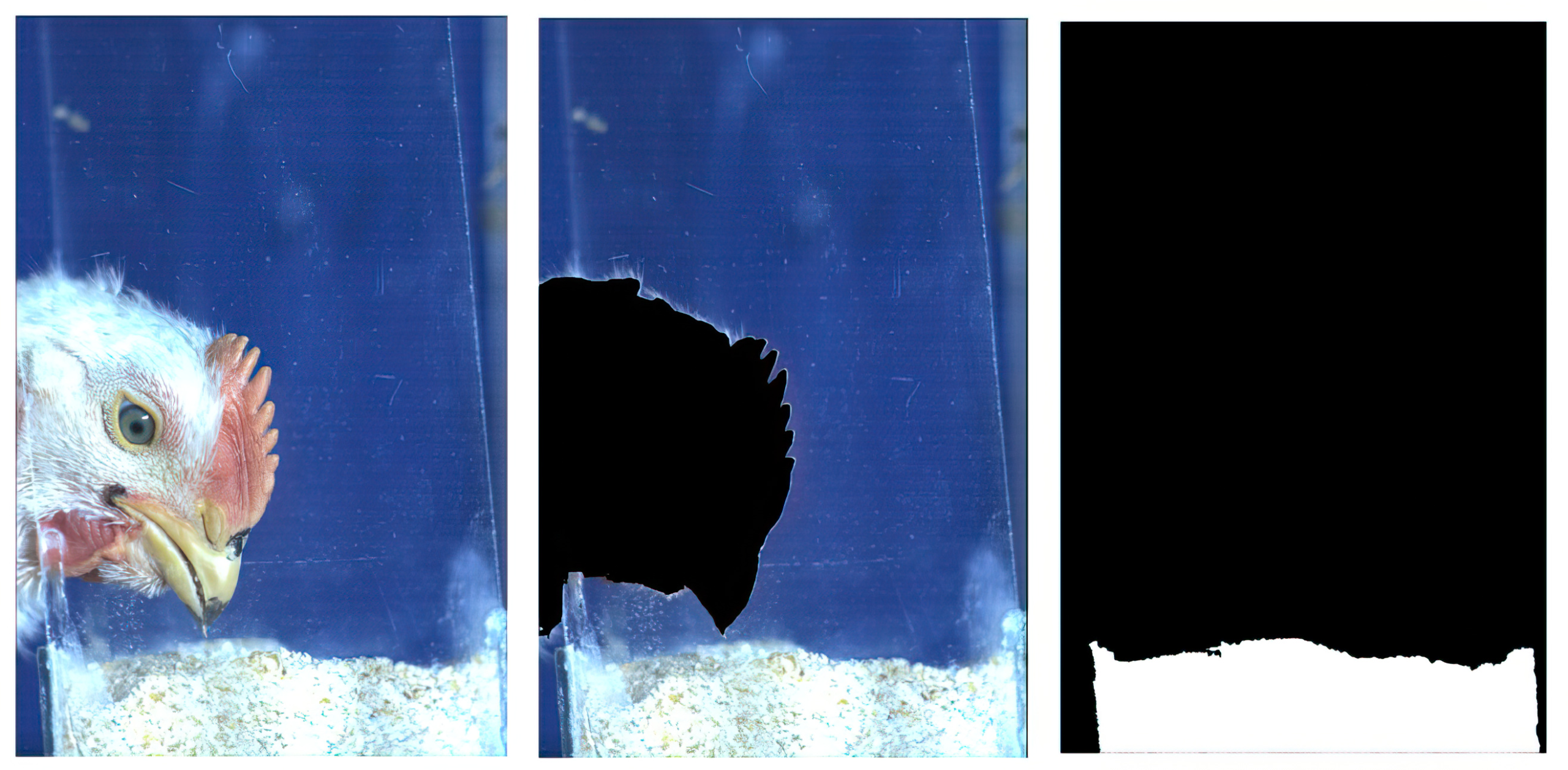

The imaging setup used an Allied Vision EoSens CoaXPress high-speed camera with a Nikon 50 mm f/1.4 lens, positioned 1.0 m to 1.5 m from the feeder to capture lateral views of the birds. Lighting was standardised with a 500 W, 6500 K LED source, and image capture ran at 300 frames per second so that rapid beak motions could be resolved frame by frame. The system was validated using nine broilers across three growth stages.
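To put the 300 frames per second figure in context, the short Python sketch below converts frame indices to timestamps and counts how many frame intervals fall inside a single beak movement. The 50 ms movement duration and the 30 frames per second comparison rate are illustrative assumptions, not values from the study.

```python
FPS = 300  # capture rate reported in the article


def frame_to_ms(frame_index: int, fps: float = FPS) -> float:
    """Timestamp of a frame in milliseconds, counting from frame 0."""
    return frame_index * 1000.0 / fps


def frames_spanned(duration_ms: float, fps: float = FPS) -> int:
    """Whole frame intervals that fit inside a movement of the given duration."""
    return int(duration_ms * fps / 1000.0)


# Time between consecutive frames at 300 fps: roughly 3.33 ms.
frame_interval_ms = 1000.0 / FPS

# Hypothetical 50 ms beak movement (assumed for illustration only).
frames_at_300 = frames_spanned(50.0)          # 15 intervals
frames_at_30 = frames_spanned(50.0, fps=30)   # 1 interval at an assumed 30 fps

print(f"{frame_interval_ms:.2f} ms per frame")
print(frames_at_300, frames_at_30)
```

Under these assumptions, a movement that a conventional-rate camera would catch in one or two frames is spread across about fifteen, which is what makes frame-by-frame tracking of the beak feasible.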

Raw footage was processed through a three-stage pipeline built in Python using OpenCV, PyTorch, YOLOv8 object detection, and Meta’s Segment Anything Model for segmentation. The researchers reported precision of 0.95 and recall of 0.91, indicating that the system could track anatomical movement reliably enough for automated phenotyping rather than manual annotation.
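For readers less familiar with these metrics, the sketch below shows how precision and recall are conventionally computed from detection counts, and what F1 score the reported figures would imply. The counts in the toy example are hypothetical and are not taken from the paper; the article does not say whether the authors report an F1 score.

```python
def precision_recall(tp: int, fp: int, fn: int) -> tuple[float, float]:
    """Standard definitions: precision = TP/(TP+FP), recall = TP/(TP+FN)."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Toy counts, hypothetical and unrelated to the study's data.
toy_precision, toy_recall = precision_recall(tp=90, fp=10, fn=30)

# F1 implied by the reported precision (0.95) and recall (0.91): about 0.93.
implied_f1 = f1_score(0.95, 0.91)

print(toy_precision, toy_recall)
print(round(implied_f1, 2))
```

In plain terms, precision of 0.95 means about one in twenty detections was spurious, while recall of 0.91 means roughly nine in a hundred true instances were missed.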

The study’s main process finding was that feed granulometry changed the mechanics of feeding in measurable ways. Larger particles produced greater beak gape and displacement, while also improving ingestion efficiency. The paper reports an effort ratio of 0.6 for pellets compared with 3.0 for mash, a fivefold difference that gives the system a direct way to quantify how feed structure alters the work birds perform at the feeder.
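As a rough illustration of how measures such as gape and displacement could be derived from tracked beak positions, the sketch below works from per-frame pixel coordinates. The article does not give the paper's exact definitions, so everything here is an assumption: gape is taken as the straight-line distance between the upper and lower beak tips, displacement as the path length travelled by one tip, and the `mm_per_px` calibration factor and all coordinates are hypothetical.

```python
import math

Point = tuple[float, float]


def gape_mm(upper_tip: Point, lower_tip: Point, mm_per_px: float) -> float:
    """Assumed definition: distance between upper and lower beak tips."""
    return math.dist(upper_tip, lower_tip) * mm_per_px


def path_length_mm(points: list[Point], mm_per_px: float) -> float:
    """Assumed definition: summed frame-to-frame travel of one tracked point."""
    return sum(math.dist(a, b) for a, b in zip(points, points[1:])) * mm_per_px


# Hypothetical tracked coordinates in pixels for three consecutive frames.
upper = [(0.0, 0.0), (3.0, 4.0), (3.0, 4.0)]
lower = [(0.0, 0.0), (3.0, 7.0), (3.0, 8.0)]
MM_PER_PX = 0.1  # hypothetical calibration factor

gapes = [gape_mm(u, l, MM_PER_PX) for u, l in zip(upper, lower)]
peak_gape = max(gapes)
upper_travel = path_length_mm(upper, MM_PER_PX)

print(round(peak_gape, 2), round(upper_travel, 2))
```

In a real deployment the calibration factor would depend on the camera distance and lens, so it would need to be measured for each setup rather than assumed.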

Quantifying feeding effort in this way moves the work beyond observation and toward control architecture. The authors frame the platform as a potential sensor layer for precision livestock farming, with dual use in production monitoring and welfare assessment. Future work will focus on edge AI deployment and commercial-barn testing, where variable lighting, denser stocking, and occlusion would place greater demands on the model.